The jobs also depend on which city you live in. Also, when you say 2 years of experience, is that total or relevant experience? A lot of recruiters will look for a minimum of 2.5 years of relevant experience in Splunk.

The harder questions are these: Do you know any additional tools like Git or Ansible? Do you have knowledge of Splunk ES and ITSI? Based on this, you can land jobs with a really good package, and I mean 7 figures. Are you a Splunk admin or a Splunk developer? Do you have the knowledge of a Splunk admin or a Splunk developer? Do you know how clustering and multisite architecture work? Have you set up your own clustered Splunk environment? Have you created any specific add-ons for Accenture on your own? Have you got your Splunk certification done? These are some of the common things that are asked in Splunk interviews and what recruiters look at. It depends entirely on the person and what they know about Splunk.

Companies like Accenture are a trap. They have a limited number of projects and a ton of people on the bench. My friend is currently at Accenture after we both made our first jump. We both have over 3 years of experience, and since he joined Accenture he has been on the bench and has done only one project, which was to set up Wendy’s Splunk architecture. He got so bored sitting on the bench that he got his AWS certification done, and he plans to jump after a year.

Let’s break this down into three simple steps.

Splunk API access and sourcetype data: I’ll show you how to set up a time-bound token that allows access to the Splunk API and how to capture the Zeek sourcetypes that are needed for the Elastic Agent integration.

Elastic Agent Zeek integration configuration and verification: We’ll use the sourcetypes that were captured from the Splunk deployment to configure the integration, and then we’ll verify that the data is coming in and that we’re able to search for the data as well as view the default Zeek dashboard.

Preconfigured machine learning (ML) anomaly detection jobs setup: We’ll set up preconfigured ML jobs for anomaly detection and review results in Elastic’s Anomaly Explorer.
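For the Splunk API access step, here is a minimal Python sketch of what a token-authenticated request to list sourcetypes might look like. It assumes the default management port 8089, the `/services/search/jobs/export` REST endpoint, and the `metadata` search command. The host name, the token placeholder, the function names, and the `zeek`/`bro` sourcetype prefixes are hypothetical, so check them against your own deployment. The request is only built here, not sent.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical values for illustration only; substitute your own deployment's.
SPLUNK_BASE_URL = "https://splunk.example.com:8089"
SPLUNK_TOKEN = "REPLACE_WITH_TIME_BOUND_TOKEN"


def build_sourcetype_request(base_url, token, index="*"):
    """Build (but do not send) a search request that lists sourcetypes."""
    query = f"| metadata type=sourcetypes index={index}"
    body = urllib.parse.urlencode(
        {"search": query, "output_mode": "json"}
    ).encode()
    return urllib.request.Request(
        url=f"{base_url}/services/search/jobs/export",
        data=body,
        # Splunk authentication tokens are passed as a Bearer token.
        headers={"Authorization": f"Bearer {token}"},
        method="POST",
    )


def zeek_sourcetypes(response_lines, prefixes=("zeek", "bro")):
    """Pick Zeek-looking sourcetypes out of line-delimited JSON results.

    The prefixes are an assumption; sourcetype names vary by add-on.
    """
    found = set()
    for line in response_lines:
        line = line.strip()
        if not line:
            continue
        result = json.loads(line).get("result", {})
        name = result.get("sourcetype", "")
        if name.lower().startswith(prefixes):
            found.add(name)
    return sorted(found)
```

To run it against a real deployment, you would pass the request to `urllib.request.urlopen` and feed the decoded response lines to `zeek_sourcetypes`. The names it returns are the ones to carry over into the Elastic Agent Zeek integration settings.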
The value is not just in getting the data into Elastic. The value is that you’ll be able to run built-in anomaly detection jobs on that data with just a few clicks to set up.

Before I get started, it must be noted that pulling data from Splunk’s API is not the most efficient way to get Zeek data into Elastic, but it is a quick and easy way for you to show value to your organization as part of a proof of concept event.

The benefit of the schema on read design principle is that it theoretically allows you to quickly onboard any unstructured data source so that you can search it, analyze the results, and subsequently perform some action. The tradeoff is that schema on read inherently adds latency to the search, and that latency penalty is incurred each and every time you search for something. Additionally, the more complex your schema, the more data you search, and the longer you have to wait for the results. Schema on read was a revolutionary design at its inception, when there was no common schema for log files, but that has now changed.

Elastic is committing our Elastic Common Schema (ECS) to the OpenTelemetry project. The goal is to converge ECS and OTel Semantic Conventions into a single open schema for metrics, traces, and now logs that is maintained by OpenTelemetry. This common schema will ultimately provide both cost and performance benefits to customers. However, we do realize the benefit of schema on read as it relates to custom log files and current data onboarding workflows, which is why we have been working on a solution called ES|QL to solve those problems.

Now for the fun stuff! I’m going to show you how to use the Elastic Agent Zeek integration to pull in Zeek sourcetypes from your Splunk deployment. It’s a relatively simple process to ingest data to Elastic via Splunk, and we have documented how to get started with data from Splunk.
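The schema on read tradeoff described above can be illustrated with a toy Python sketch. This is not how Splunk or Elasticsearch work internally. The sample log lines, the `key=value` format, and all function names are made up for illustration. The point is only that one approach parses fields on every search, while the other parses once at ingest.

```python
import re

# Made-up sample events in a simple key=value format.
RAW_LOGS = [
    "2024-01-01T00:00:01Z src=10.0.0.5 dst=8.8.8.8 proto=udp",
    "2024-01-01T00:00:02Z src=10.0.0.7 dst=1.1.1.1 proto=tcp",
    "2024-01-01T00:00:03Z src=10.0.0.5 dst=9.9.9.9 proto=udp",
]

FIELD_PATTERN = re.compile(r"(\w+)=(\S+)")


def parse(line):
    """Extract key=value pairs from one raw log line."""
    return dict(FIELD_PATTERN.findall(line))


def search_schema_on_read(raw_lines, field, value):
    """Parse every line again on every search (cost paid per query)."""
    return [line for line in raw_lines if parse(line).get(field) == value]


def ingest_schema_on_write(raw_lines):
    """Parse each line once at ingest and store the structured result."""
    return [{**parse(line), "raw": line} for line in raw_lines]


def search_schema_on_write(docs, field, value):
    """Search already-structured documents; no parsing at query time."""
    return [doc["raw"] for doc in docs if doc.get(field) == value]


docs = ingest_schema_on_write(RAW_LOGS)
read_hits = search_schema_on_read(RAW_LOGS, "proto", "udp")
write_hits = search_schema_on_write(docs, "proto", "udp")
```

Both searches return the same events. The difference is where the parsing cost lands: on every query in the first case, once at ingest in the second.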

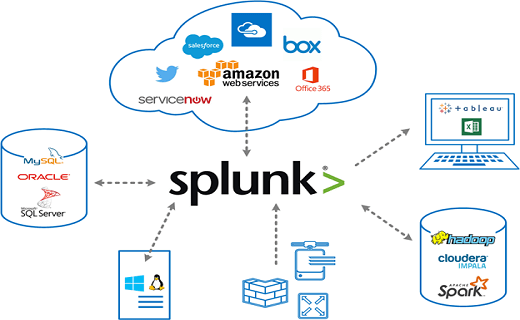

Do you think you may have Indicators of Compromise (IOCs) floating around in the sea of your Splunk deployment’s Zeek data? Are you concerned that you may not learn about anomalous behavior until it’s too late? If so, then keep reading to learn how Elastic® can help. But first, let me explain the history behind this.

Splunk is good at getting data into the platform, specifically unstructured data from log files and API endpoints. It was built on the design principle of schema on read.
